Publication Rates

Motivation and Takeaways

Our research on the leaky pipeline in Linguistics indicates that women are advancing in the field at disproportionately lower rates than men. There are many potential reasons for the pattern that we have observed, since many different factors influence advancement from undergraduate to graduate to early and then later faculty positions. Because publication rate is one metric that influences advancement, in this project we investigate potential gender bias in publication rates across sub-fields of Linguistics.

Simulations from Martell, Lane, & Emrich (1996) show that even with equal representation at the entry level of a hierarchical organization, gender bias accounting for just one percent of the variance in the performance scores that determine advancement quickly propagates upwards, so that only 35% of employees at the top level of the organization are women. In the same way, even very subtle gender bias in publication rates could amount to larger effects on promotion rates.

From the data we have collected, we conclude that in some sub-fields women do indeed publish less than would be expected given their representation. This is evident both historically and currently. Determining causation for these trends is very difficult, but we are exploring various factors that might contribute. One obvious limitation is that without data on submission rates to journals, we can’t determine whether women are simply submitting less, or are submitting at the same rate but seeing their submissions advance to publication at a lower rate. More details and discussion will be made available in a paper currently in preparation.

Data

In all plots shown below, the black lines indicate our representation estimate, and publication rates are shown as bars reflecting deviation from the representation estimate. When women are publishing less than would be expected given their representation estimate, this is indicated with an orange bar. When women are publishing more than would be expected given their representation estimate, this is indicated with a blue bar. Our methods are described in more detail at the bottom of this write-up.

Note that in general when we discuss bias, or over-/under-representation, we simply mean deviation from the expected proportion of 50%. When we discuss over- or under-publication, we mean that the proportion of woman-authored publications deviates from our estimated representation of women.
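As a minimal sketch of these definitions (not the authors' analysis code, which the Methods section says was written in R), the deviation shown by each bar can be computed as the proportion of woman-authored publications minus the estimated representation of women. The function names and the example numbers are hypothetical, and treating an exact match as "no bar" is an assumption.

```python
def publication_deviation(women_pub_share: float, representation: float) -> float:
    """Proportion of woman-authored publications minus estimated representation."""
    return women_pub_share - representation


def bar_colour(deviation: float) -> str:
    """Colour convention used in the plots: orange = under-publishing, blue = over-publishing."""
    if deviation < 0:
        return "orange"
    if deviation > 0:
        return "blue"
    return "none"  # assumption: no bar when the two proportions match exactly


# Hypothetical year: women are 40% of active authors but 35% of authorships.
dev = publication_deviation(0.35, 0.40)
print(round(dev, 2), bar_colour(dev))
```

Under this convention, representation of 0.40 is itself "under-representation" (below 0.50), while the negative deviation is "under-publication"; the two quantities are measured against different baselines.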

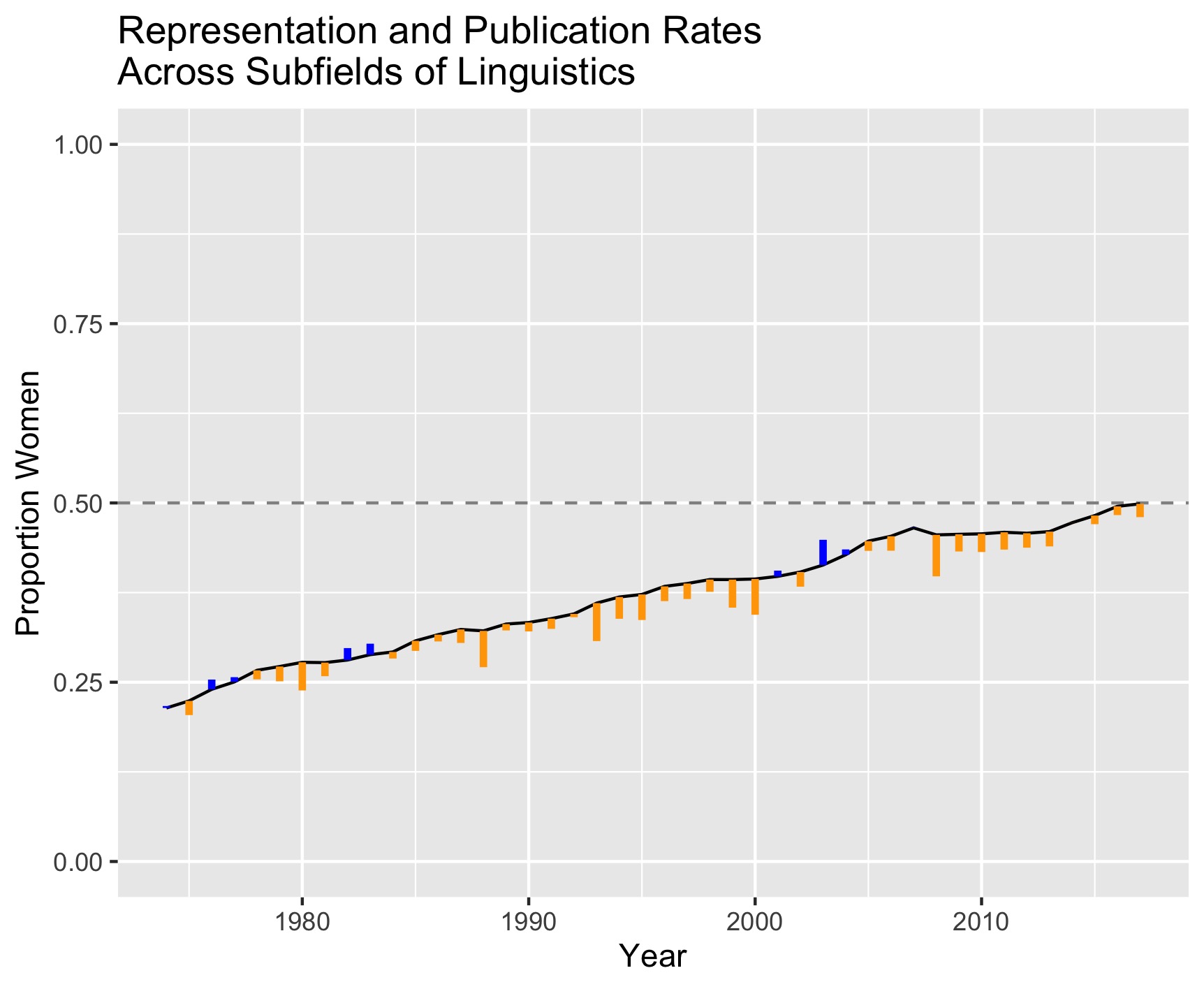

Across all sub-fields

We first plot all of our data at once, to give a full portrait of the field over time, although we expect that representation and publication rates could vary quite a bit by sub-field.

The first thing to note here is that women have been severely under-represented historically, but that this has been steadily improving. In recent years Linguistics (as reflected in our sample of journals) seems to have reached representational parity, but there are still many more orange bars than blue bars, indicating that overall women are publishing at a lower rate than men. Also note that our leaky pipeline dataset shows an equal gender balance when we collapse over career stage. It is therefore possible for the overall representation estimate to reach 50% while women remain under-represented as faculty.

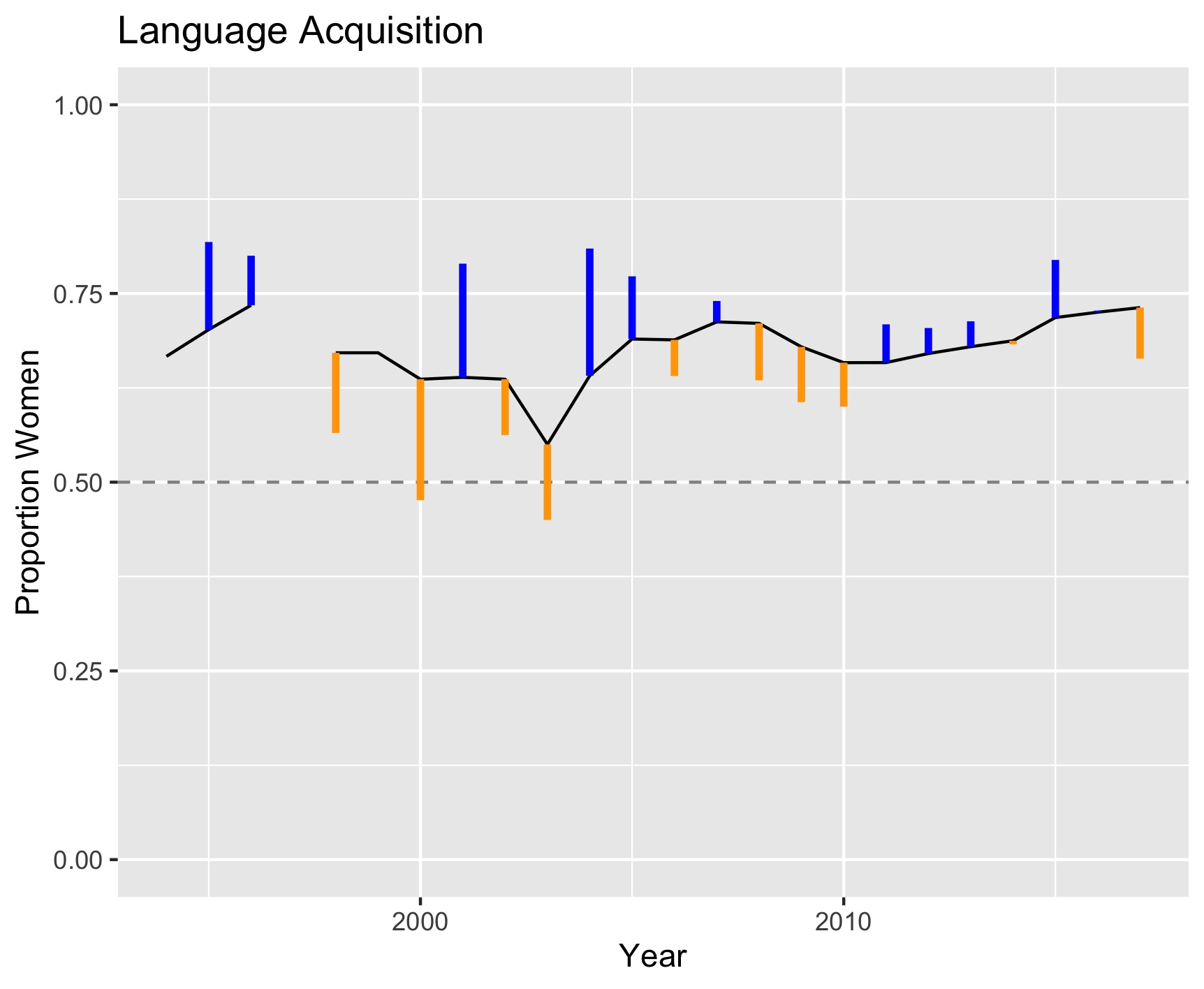

Acquisition

Breaking the data down by sub-field, we first consider language acquisition. This data does not go back as far in time as some of the other sub-fields.

Women are actually over-represented in this sub-field, but independent of this, there seems to be only random variation in whether women publish more or less than would be expected given their representation. We conclude that there does not seem to be evidence for systematic publication bias in Acquisition.

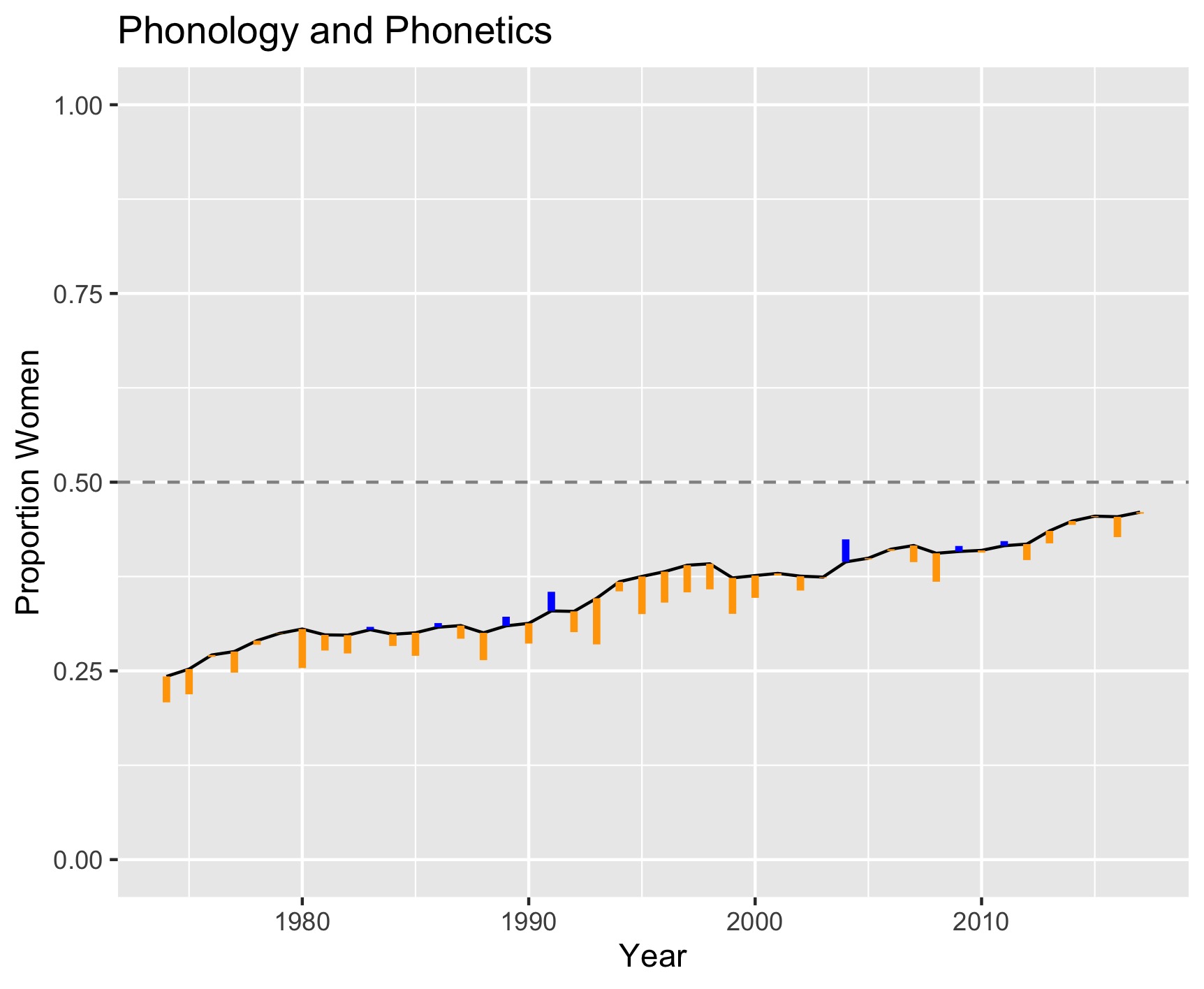

Phonology/Phonetics

This is our sub-field with the most data (on average, 717 cases per year), so our estimates are more precise here, and go back to 1970.

In Phonology/Phonetics, women have very consistently published less than we would expect given their representation, although this may be improving in recent years. We also see that representation is only recently approaching 50%.

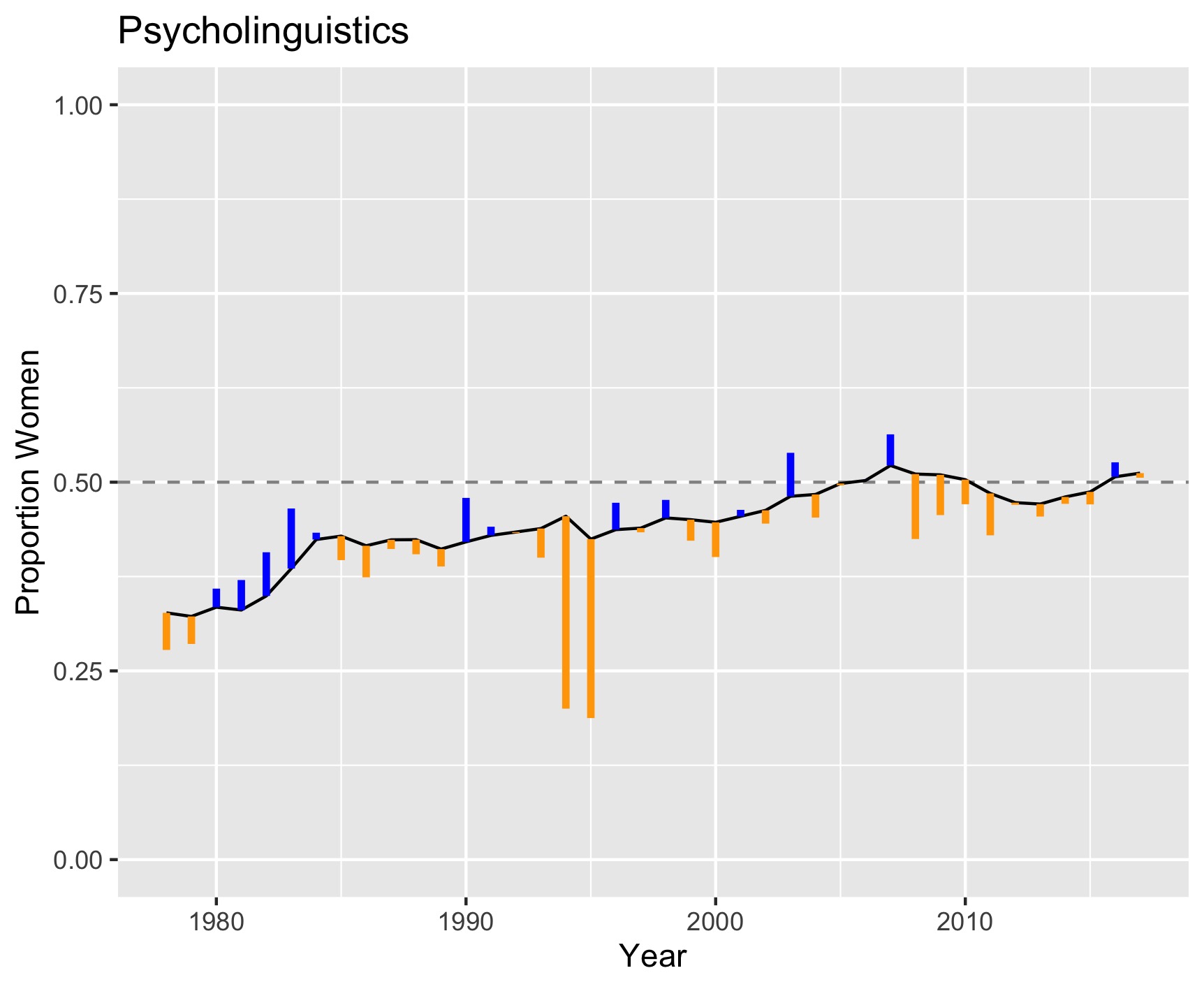

Psycholinguistics

In Psycholinguistics, representation appears to have reached parity around 2005.

Though there are periods of both over- and under-publishing, in the last 10 years women appear to be under-publishing more consistently, a trend we will continue to monitor.

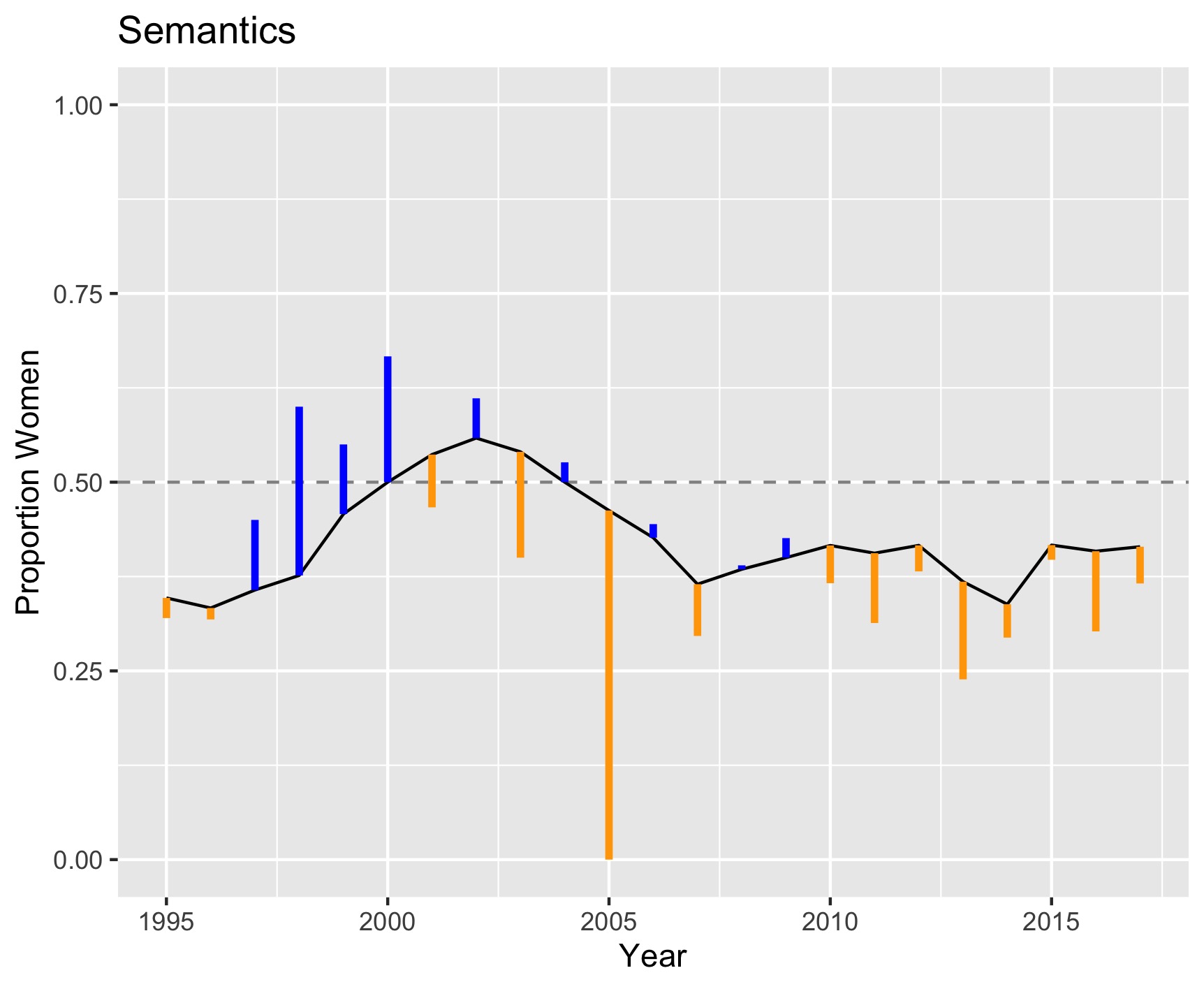

Semantics

Representation in Semantics has been variable, but women have been consistently under-represented over the last 15 years, and representation does not seem to be increasing.

In addition to this under-representation, women are consistently under-publishing.

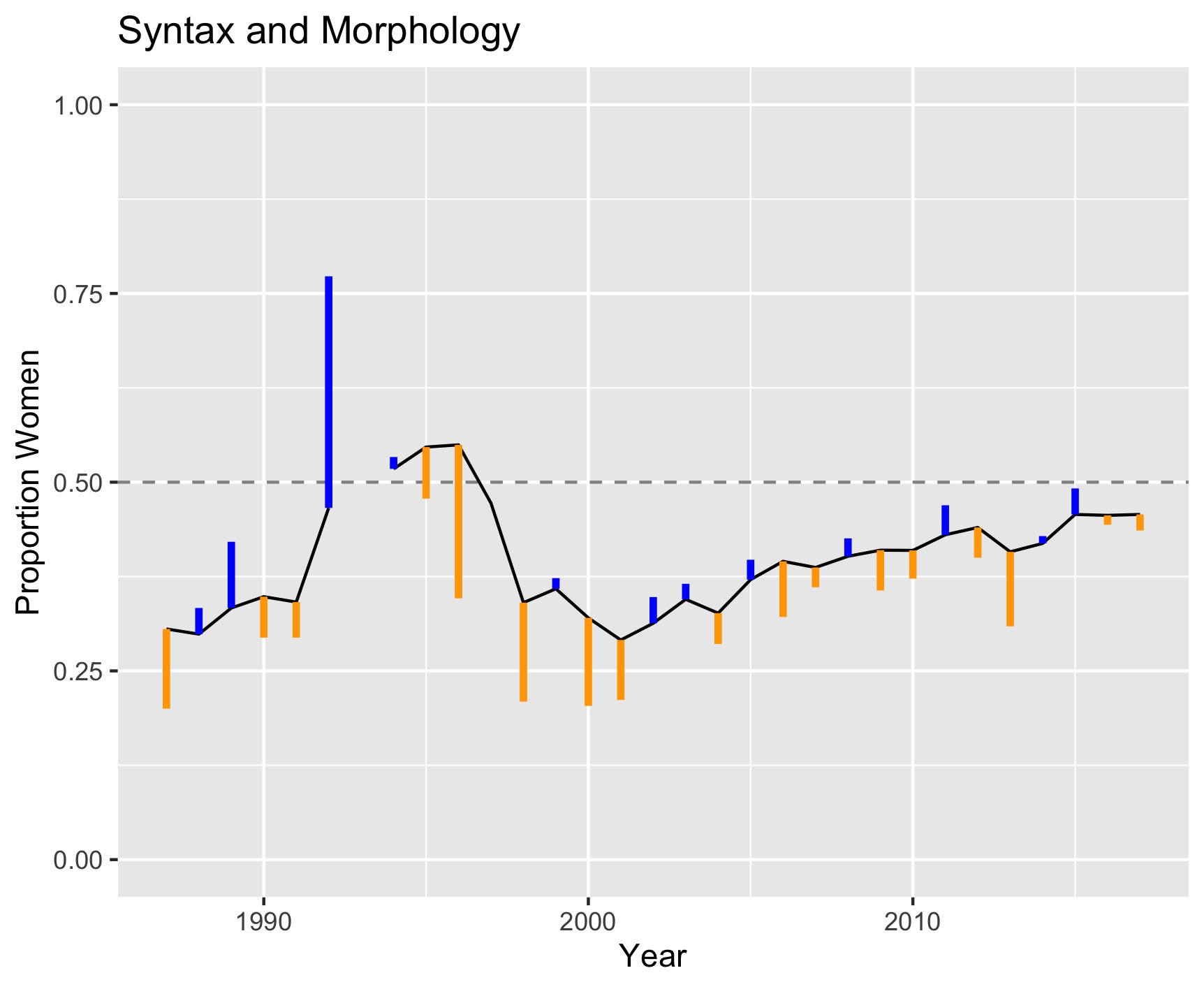

Syntax/Morphology

In Syntax and Morphology, women are under-represented, but the representation estimate is climbing toward parity.

We see somewhat more under-publishing than over-publishing, but this variation looks mostly random in the last 20 years.

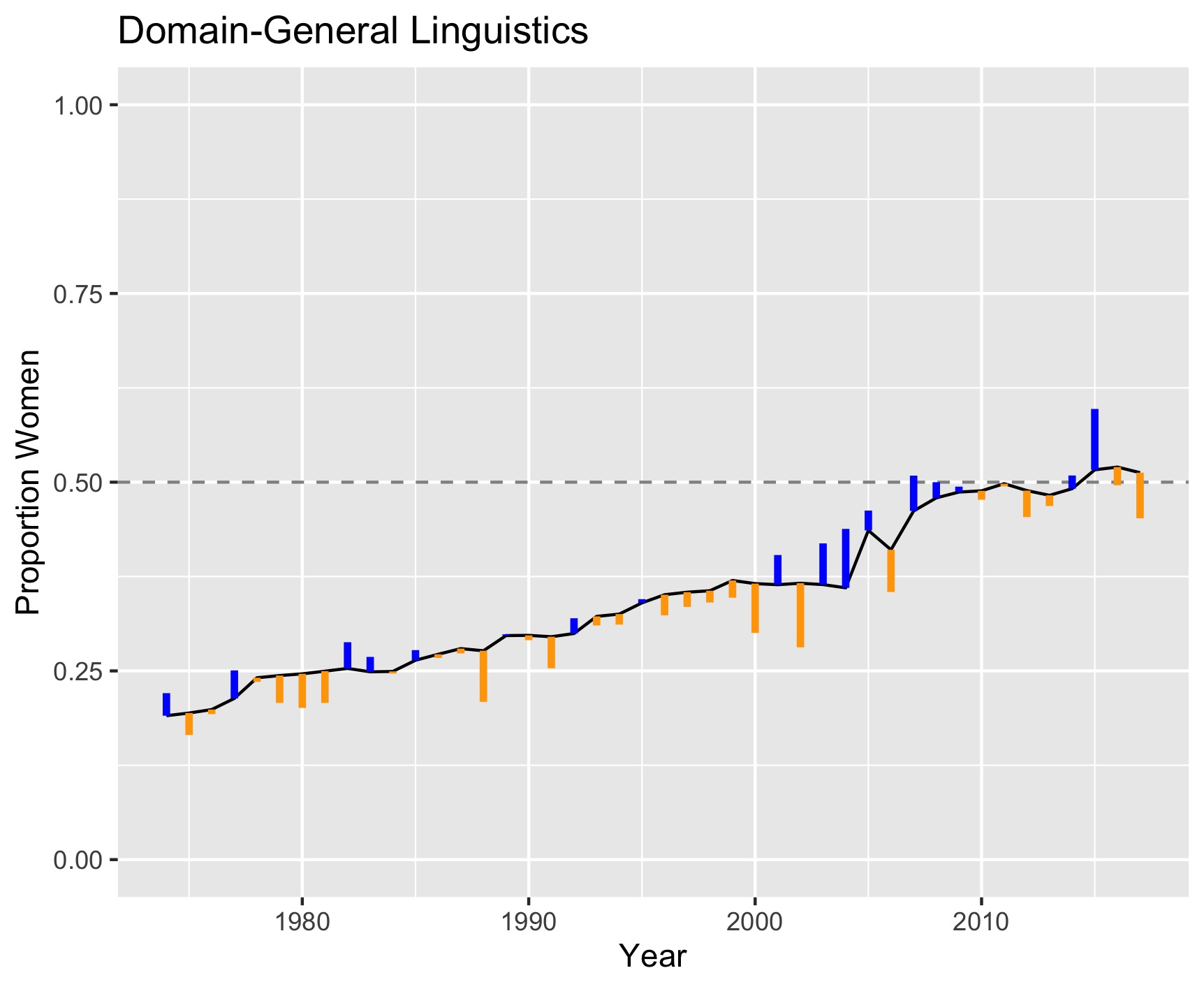

Domain-general

Finally, in journals that publish research across different sub-fields, we see that representation has recently reached parity.

There does not appear to be an issue with publication bias in these domain-general journals.

Single vs. Double-blind

If any of the deviations in publication rate that we observe are due to reviewer bias, we might expect to see a difference between journals with single-blind and double-blind reviewing processes. When we split our data by this factor, we do not observe any clear differences.

This does not necessarily mean that reviewer bias has no influence, since it could also be that double-blinding is ineffective.

Methods

We extracted all available citation data from 31 journals in the areas of Syntax, Semantics, Phonology/Phonetics, Language Acquisition, and Psycholinguistics, as well as more domain-general journals covering multiple sub-fields. We did this using the R package rcrossref. Two journals with a broader focus were filtered to publications with at least one author who had authored another publication in our dataset. We automatically tagged author gender using genderizeR, which bases its gender tags on first names. This method achieved 97% accuracy on a hand-tagged subset of 744 linguists. We then had a dataset of 87,000 instances of gender-tagged authorship, averaging 1070 publications per year.
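The filtering step for the two broader journals can be sketched as follows. This is an illustrative Python sketch, not the authors' R code. The record layout, the function name `filter_broad_journals`, and the journal and author names are all hypothetical. It also assumes that authors are matched by an exact identifier and that "another publication in our dataset" may come from any journal, including the broad ones.

```python
from collections import defaultdict


def filter_broad_journals(publications, broad_journals):
    """Keep all publications from focused journals; keep a publication from a
    broad journal only if one of its authors appears on some other publication."""
    # Map each author to the set of publication ids they appear on.
    pubs_by_author = defaultdict(set)
    for pub in publications:
        for author in pub["authors"]:
            pubs_by_author[author].add(pub["id"])

    kept = []
    for pub in publications:
        if pub["journal"] not in broad_journals:
            kept.append(pub)
        elif any(len(pubs_by_author[a]) > 1 for a in pub["authors"]):
            # More than one id means the author has a publication besides this one.
            kept.append(pub)
    return kept


# Hypothetical records for illustration only.
pubs = [
    {"id": 1, "journal": "Focused Journal", "authors": ["A. Author"]},
    {"id": 2, "journal": "Broad Journal", "authors": ["A. Author", "B. Other"]},
    {"id": 3, "journal": "Broad Journal", "authors": ["C. Outsider"]},
]
kept_ids = [p["id"] for p in filter_broad_journals(pubs, {"Broad Journal"})]
print(kept_ids)
```

Here publication 2 is kept because one of its authors also wrote publication 1, while publication 3 is dropped because its only author appears nowhere else.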

Calculating authorship rates by gender would not be meaningful without an estimate of the representation of women in the field, since women could be under-represented but still publishing in proportion to their representation. We estimated representation in each year by taking the set of unique authors, tagged as men or women, who had published at least once in the previous five years, and computing the proportion of women in that set.
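A minimal sketch of this representation estimate is given below, in Python rather than the authors' R. The tuple layout, the function name `estimate_representation`, and the example records are hypothetical. Two points are assumptions rather than details stated here: that "previous five years" excludes the target year itself, and that the estimate is the proportion of women among the unique active authors.

```python
def estimate_representation(authorships, year, window=5):
    """Proportion of women among unique gender-tagged authors who published at
    least once in the `window` years before `year`.

    `authorships` is an iterable of (pub_year, author_id, gender) tuples with
    gender "woman" or "man". Returns None if no tagged author was active.
    """
    # Assumption: the window covers year - window .. year - 1, excluding `year`.
    active = {}
    for pub_year, author_id, gender in authorships:
        if year - window <= pub_year < year and gender in ("woman", "man"):
            active[author_id] = gender  # each author is counted once
    if not active:
        return None
    women = sum(1 for g in active.values() if g == "woman")
    return women / len(active)


# Hypothetical authorship records for illustration only.
records = [
    (2001, "a1", "woman"),
    (2003, "a1", "woman"),  # same author again, still counted once
    (2002, "a2", "man"),
    (2004, "a3", "man"),
    (1990, "a4", "woman"),  # too early to fall inside the window
    (2005, "a5", "woman"),  # target year itself, excluded under the assumption
]
rep_2005 = estimate_representation(records, 2005)
print(rep_2005)
```

Counting unique authors rather than authorships keeps a few highly prolific authors from dominating the estimate, which is what allows publication counts to be compared against it.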

Our code for this analysis is publicly available on GitLab.